Batching ingestion does data batching and is optimized for high ingestion throughput. This method is the preferred and most performant type of ingestion. Data is batched according to ingestion properties. Small batches of data are then merged and optimized for fast query results. The ingestion batching policy can be set on databases or tables. By default, the maximum batching value is 5 minutes, 1000 items, or a total size of 1 GB. The data size limit for a batch ingestion command is 6 GB.

Actions other than ingestion, such as query, may require database admin, database user, or table admin permissions.
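As a rough illustration of how the three default batching limits interact, the sketch below models a batch that is sealed as soon as any one limit is reached. This is a toy model, not Azure Data Explorer's implementation. The class and function names are hypothetical, and the JSON property names (`MaximumBatchingTimeSpan`, `MaximumNumberOfItems`, `MaximumRawDataSizeMB`) and the `.alter table ... policy ingestionbatching` command form are assumed to match the ingestion batching policy documentation; verify them against your cluster before use.

```python
import json
from dataclasses import dataclass
from datetime import timedelta


@dataclass(frozen=True)
class BatchingPolicy:
    """Default limits quoted in the text: 5 minutes, 1000 items, 1 GB."""
    max_time: timedelta = timedelta(minutes=5)
    max_items: int = 1000
    max_raw_size_mb: int = 1024

    def seal_reason(self, age: timedelta, items: int, size_mb: float):
        """Return which limit seals the batch, or None if it stays open."""
        if age >= self.max_time:
            return "time"
        if items >= self.max_items:
            return "count"
        if size_mb >= self.max_raw_size_mb:
            return "size"
        return None

    def to_policy_json(self) -> str:
        """Serialize with the (assumed) batching policy property names."""
        total = int(self.max_time.total_seconds())
        hh, rem = divmod(total, 3600)
        mm, ss = divmod(rem, 60)
        return json.dumps({
            "MaximumBatchingTimeSpan": f"{hh:02d}:{mm:02d}:{ss:02d}",
            "MaximumNumberOfItems": self.max_items,
            "MaximumRawDataSizeMB": self.max_raw_size_mb,
        })


def alter_table_command(table: str, policy: BatchingPolicy) -> str:
    """Build a management command string; `table` is a placeholder name."""
    return (
        f".alter table {table} policy ingestionbatching "
        f"@'{policy.to_policy_json()}'"
    )
```

For example, `BatchingPolicy().seal_reason(timedelta(seconds=30), 1000, 5.0)` reports that the item count, not the timer, closes the batch.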

Data ingestion is the process used to load data records from one or more sources into a table in Azure Data Explorer. Once ingested, the data becomes available for query. The diagram below shows the end-to-end flow for working in Azure Data Explorer and shows different ingestion methods.

The Azure Data Explorer data management service, which is responsible for data ingestion, implements the following process:

Azure Data Explorer pulls data from an external source and reads requests from a pending Azure queue. Data is batched or streamed to the Data Manager. Batch data flowing to the same database and table is optimized for ingestion throughput. Azure Data Explorer validates initial data and converts data formats where necessary. Further data manipulation includes matching schema, organizing, indexing, encoding, and compressing the data. Data is persisted in storage according to the set retention policy. The Data Manager then commits the data ingest into the engine, where it's available for query.

Supported data formats, properties, and permissions

- Supported data formats: The data formats that Azure Data Explorer can understand and ingest natively (for example, Parquet, JSON).
- Ingestion properties: The properties that affect how the data will be ingested (for example, tagging, mapping, creation time).
- Permissions: To ingest data, the process requires database ingestor level permissions.

When you select Upload in Azure Storage Explorer, the selected files are queued, and each file is uploaded. When the upload is complete, the results are shown in the Activities window.

In the Azure Storage Explorer application, select a directory under a storage account. The main pane shows a list of the blobs in the selected directory. To download files by using Azure Storage Explorer, with a file selected, select Download from the ribbon. A file dialog opens and provides you with the ability to enter a file name. Choose Select Folder to start the download of a file to the local location.
The Explorer view gives you insight into all of your Azure storage accounts as well as local storage configured through the Azurite storage emulator or Azure Stack environments.

A container holds directories and files. To create one, expand the storage account you created in the preceding step. Select Blob Containers, right-click, and select Create Blob Container. Alternatively, you can select Blob Containers, then select Create Blob Container in the Actions pane. Enter the name for your container. See the Create a container section for a list of rules and restrictions on naming containers. When complete, press Enter to create the container. After the container has been successfully created, it's displayed under the Blob Containers folder for the selected storage account.

To create a directory, select the container that you created in the preceding step. In the container ribbon, choose the New Folder button. When complete, press Enter to create the directory. After the directory has been successfully created, it appears in the editor window.

On the directory ribbon, choose the Upload button. This operation gives you the option to upload a folder or a file.
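The container naming rules mentioned above are not listed in this article. As a minimal sketch, assuming the commonly documented rules for Azure blob containers (3 to 63 characters; lowercase letters, digits, and hyphens only; must start and end with a letter or digit; no consecutive hyphens), a name could be checked before it is typed into Storage Explorer. The function name is hypothetical, and special system containers such as `$root` are deliberately not handled.

```python
import re

# An alphanumeric start, then any run of "optional single hyphen + alphanumeric".
# This shape rules out leading, trailing, and consecutive hyphens.
_CONTAINER_NAME = re.compile(r"[a-z0-9](?:-?[a-z0-9])*")


def is_valid_container_name(name: str) -> bool:
    """Check a name against the assumed blob container naming rules."""
    if not 3 <= len(name) <= 63:
        return False
    return _CONTAINER_NAME.fullmatch(name) is not None
```

A name such as `my-container` passes, while `My-Container`, `ab`, and `a--b` are rejected.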

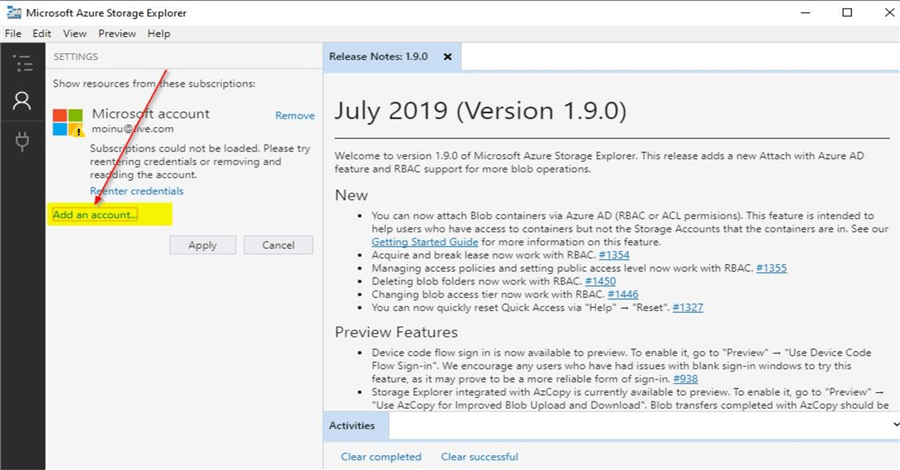

Storage Explorer makes use of both the Blob (blob) and Data Lake Storage Gen2 (dfs) endpoints when working with Azure Data Lake Storage Gen2. If access to Azure Data Lake Storage Gen2 is configured using private endpoints, ensure that two private endpoints are created for the storage account: one with the target sub-resource blob and the other with the target sub-resource dfs.

When you first start Storage Explorer, the Microsoft Azure Storage Explorer - Connect to Azure Storage window appears. While Storage Explorer provides several ways to connect to storage accounts, only one way is currently supported for managing ACLs. In the Select Resource panel, select Subscription. In the Select Azure Environment panel, select an Azure environment to sign in to. You can sign in to global Azure, a national cloud, or an Azure Stack instance. Storage Explorer opens a webpage for you to sign in.

After you successfully sign in with an Azure account, the account and the Azure subscriptions associated with that account appear under ACCOUNT MANAGEMENT. Select the Azure subscriptions that you want to work with, and then select Open Explorer. When it completes connecting, Azure Storage Explorer loads with the Explorer tab shown.
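To make the two endpoints concrete, the sketch below derives the blob and dfs URLs for a storage account. It assumes the global Azure DNS suffix `core.windows.net`; national clouds and Azure Stack use different suffixes, so the suffix is a parameter. The function name and the account name in the usage note are hypothetical.

```python
def adls_gen2_endpoints(account: str, suffix: str = "core.windows.net") -> dict:
    """Return the blob and dfs endpoint URLs for one storage account.

    Both endpoints are used when working with Data Lake Storage Gen2,
    so both need to be reachable from the client machine.
    """
    return {
        sub_resource: f"https://{account}.{sub_resource}.{suffix}"
        for sub_resource in ("blob", "dfs")
    }
```

Calling `adls_gen2_endpoints("mystorageacct")` yields one URL per target sub-resource, which can serve as a checklist when confirming that a private endpoint exists for each.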
